I Know Kung Fu: Load Only the Skills the Mission Needs

Claude Code skills are not plugins. They are not tools. They are knowledge, uploaded at the precise moment it's needed.

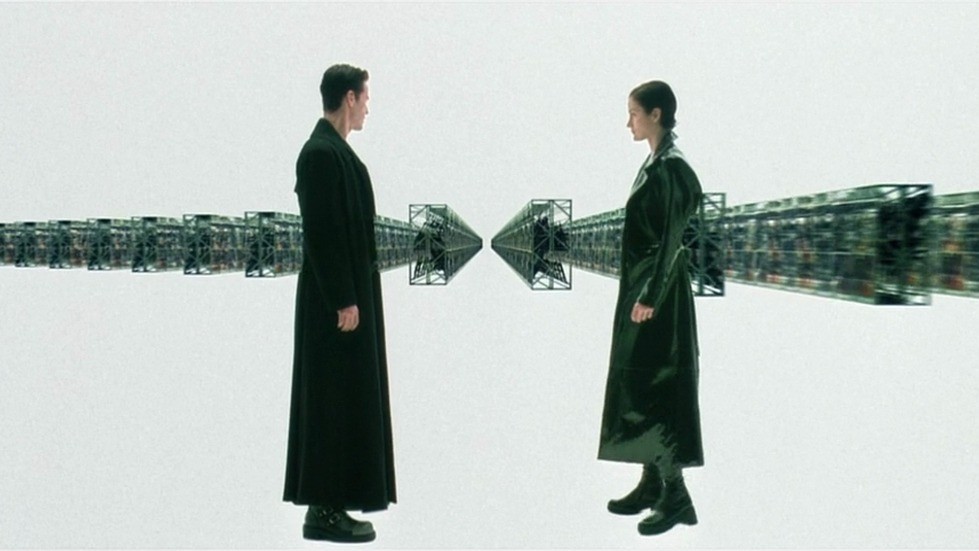

There is a scene in The Matrix that everyone remembers. Neo sits in a chair. Tank, the Operator, loads a program. Neo’s eyes snap open. “I know kung fu.”

No years of practice. No textbooks. Just the precise knowledge, delivered at the precise moment it is needed.

This is what it feels like to give an AI agent a skill.

The Problem With Loading Everything

MCP gave AI agents access to external tools, but at a cost most people overlook: when you hand an agent a set of MCP tools, it doesn’t just receive the tools. It receives descriptions of every tool. Schemas. Parameters. Documentation. The full inventory, loaded upfront, before the agent reads a single word of your actual request.

One example from Anthropic’s own research: an agent consumed 150,000 tokens before it knew what it was being asked to do.

That is like loading every martial art, every vehicle manual, and every weapon system into Neo’s head at once. The mind doesn’t expand. It drowns. More is not better when more fills the only room where thinking happens.

The Operator’s Chair

Tank doesn’t load everything into Neo’s head at once. He loads what Neo needs, when Neo needs it. Jujitsu? Loaded. Kempo? Loaded. Drunken boxing? Loaded. The point is not that Neo has access to every martial art. The point is that only the relevant martial art occupies his mind at the moment of the fight.

Claude Code skills work the same way. A skill is a Markdown file, SKILL.md, living in its own folder under .claude/skills/. Here is what one looks like:

```markdown
---
description: "Deploy services to staging or production using our internal kubectl workflow"
tags: ["deployment", "infrastructure"]
---
## Prerequisites
- Verify the service builds cleanly before deploying
- Check that staging is not locked by another deploy

## Staging Deploy
1. Run `kubectl apply -f manifests/staging/`
2. Wait for rollout: `kubectl rollout status deployment/$SERVICE`
3. Hit the health check: `curl https://staging.internal/$SERVICE/health`
4. Notify #deploys in Slack with the commit SHA

## Production Deploy
...
```

Here is the critical architectural decision: only the skill’s name and its description line enter the agent’s context at startup. Not the instructions. Not the scripts. Not the reference files. Just a short piece of metadata, roughly 100 tokens, that tells the agent what the skill does and when to reach for it.

When someone says “deploy the auth service to staging,” the agent recognizes the match, pulls in the full instructions, and executes. If those instructions reference scripts, the agent reads them. If they reference executable code, the agent runs it and receives only the output. The code itself never enters context.

Three levels. Metadata always loaded. Instructions loaded on demand. Resources loaded when needed. Progressive disclosure, applied to an agent’s working memory.
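To make the three levels concrete, here is a minimal Python sketch of the loading pattern. It is an illustration under stated assumptions, not Claude Code’s actual implementation. Everything in it is hypothetical: the `SkillRegistry` class, its methods, the `context` list standing in for the context window, and the `name: deploy` field used in the sample file. The parser understands only flat `key: value` frontmatter, and `match` uses crude keyword overlap as a stand-in for the judgment the model itself makes when it decides a skill is relevant.

```python
# Hypothetical sketch of progressive disclosure; not Claude Code's implementation.
import re
from typing import Callable, Dict, List, Optional, Tuple

FRONTMATTER = re.compile(r"^---\n(.*?)\n---\n?(.*)$", re.DOTALL)


def parse_frontmatter(text: str) -> Tuple[Dict[str, str], str]:
    """Split a SKILL.md-style file into (metadata fields, instruction body).

    Deliberately minimal: handles flat `key: value` lines only, not full YAML.
    """
    m = FRONTMATTER.match(text)
    if m is None:
        return {}, text
    fields: Dict[str, str] = {}
    for line in m.group(1).splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            fields[key.strip()] = value.strip().strip('"')
    return fields, m.group(2)


class SkillRegistry:
    """Toy model of the three loading levels; `context` stands in for the context window."""

    def __init__(self) -> None:
        self._sources: Dict[str, str] = {}  # full files, kept outside context
        self.descriptions: Dict[str, str] = {}
        self.context: List[str] = []

    def install(self, source: str) -> None:
        # Level 1: only the name and description enter context at startup.
        fields, _body = parse_frontmatter(source)
        name, description = fields["name"], fields["description"]
        self._sources[name] = source
        self.descriptions[name] = description
        self.context.append(f"{name}: {description}")

    def match(self, request: str) -> Optional[str]:
        # Stand-in for the model's judgment: pick the skill whose description
        # shares the most words with the request (None if nothing overlaps).
        words = set(re.findall(r"[a-z]+", request.lower()))
        best: Optional[str] = None
        best_score = 0
        for name, description in self.descriptions.items():
            score = len(words & set(re.findall(r"[a-z]+", description.lower())))
            if score > best_score:
                best, best_score = name, score
        return best

    def activate(self, name: str) -> str:
        # Level 2: the full instruction body loads only when the skill is chosen.
        _fields, body = parse_frontmatter(self._sources[name])
        self.context.append(body)
        return body

    def run_resource(self, script: Callable[[], str]) -> str:
        # Level 3: a bundled script runs outside context; only its output is kept.
        output = script()
        self.context.append(output)
        return output


if __name__ == "__main__":
    registry = SkillRegistry()
    registry.install(
        "---\nname: deploy\n"
        'description: "Deploy services to staging or production using our internal kubectl workflow"\n'
        "---\n## Staging Deploy\n1. Run `kubectl apply -f manifests/staging/`\n"
    )
    print(registry.context)  # one metadata line, no instructions yet
    chosen = registry.match("deploy the auth service to staging")
    if chosen is not None:
        registry.activate(chosen)  # the instructions enter context only now
    print(len(registry.context))
```

The design choice the sketch mirrors: `install` reads the whole file but admits only one line into `context`. The instruction body, and any script output, arrive later, and only when a request calls for them.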

The context window stays clean until the moment it matters. Then Tank loads the program.

Your Kung Fu, Not Kung Fu

Here is where people get confused. A skill does not add capabilities. Claude already knows Python. It already knows SQL, Kubernetes, Terraform, and how to write a bash script. You do not need to teach it kung fu.

What you need to teach it is your kung fu.

Your company’s deployment process. Your database naming conventions. Your staging URLs. Your rollback procedures. The quirk in your CI pipeline’s third step. The connection string format that differs from the documentation nobody reads.

Remember the child bending the spoon? “There is no spoon.” The bottleneck was never the model’s intelligence. It was never compute, parameters, or context length. It was institutional knowledge: the ten thousand small decisions your organization has made that exist nowhere except in the heads of your senior engineers.

Skills close that gap. One hundred tokens in context. The rest loads only when needed. The agent didn’t become smarter. It became yours.

Skills Are Alive

Here is the part that separates skills from static configuration.

A skill is not a rigid script. It gives the agent enough direction to act without dictating every step. Write “check that staging is healthy” and the agent decides how to check. Write “notify the team” and it figures out the channel. The instructions set the corridor; the agent navigates within it. You control how prescriptive or creative the behavior is, skill by skill.

And skills evolve. After a deployment goes wrong at 2am, you update the skill with the new edge case. After a retrospective surfaces a better rollback procedure, you commit the change. The skill file lives in your repository, versioned like code, reviewed like code, improved like code. Every retrospective makes the next mission sharper.

This is the difference between training and documentation. Documentation sits in a wiki and rots. A skill sits in the agent’s training construct and improves with every mission.

Tank’s Console Gets an Upgrade

This week, Anthropic shipped Sonnet 4.6. The numbers matter here because they measure exactly what skills require.

Tool use accuracy (MCP-Atlas) jumped from 43.8% to 61.3%: the agent follows instructions better. Agentic task completion (OSWorld) went from 61.4% to 72.5%: it chains actions more reliably. On real-world knowledge work (GDPval), Sonnet 4.6 scored 1633 Elo, actually higher than Opus 4.6. The workhorse, at one-fifth the cost, outperforming the flagship on a benchmark built around real knowledge work.

Tank’s upload is faster. Neo opens his eyes sooner. The training construct just got an upgrade.

Monday Morning

Three things you can do with this.

If you run engineering teams: Build skills for your most repeated workflows. The deployment skill. The code review checklist. The incident response runbook. Each one makes every engineer’s Claude session as good as your best engineer’s session. The senior who always remembers the edge case? Encode that knowledge. Make it load on demand. Update it after every retro.

If you evaluate AI tools: Skills are the answer to “but how does it know our process?” Custom GPTs tried this and hit the chat ceiling: no access to your repository, no execution against your infrastructure, no real integration. MCP tried it and hit the token tax: everything loaded upfront, the agent drowning before it started. Skills thread the needle: specific knowledge, minimal context cost, full code execution, and steady improvement over time.

If you set strategy: The moat is not the model. Models improve for everyone. Sonnet 4.6 is available to your competitors too. The moat is the skill library. The company that builds the best internal skills will have agents that operate like tenured engineers from day one. That advantage compounds with every retrospective.

Walking the Path

Morpheus tells Neo: “There is a difference between knowing the path and walking the path.”

The model was always capable of the walk. What it couldn’t do was navigate your path: your conventions, your infrastructure, your particular way of doing things that took years to develop and exists mostly as tribal knowledge.

Skills are the path, made legible.

Tank sits at his console. The program loads. The agent opens its eyes.

It knows your kung fu.